multi-storied #7: What will it take?

I am sure I am not alone in having wondered again and again over the past decade, over the tenures of various governments in India, the UK and the US: What will it take? What item of their agenda—what act of malevolence, what priority or lack thereof—will provoke a universal, bipartisan denunciation? What aspect of public life is valuable enough to everyone on the political spectrum that an assault upon it would arouse censure in unison?

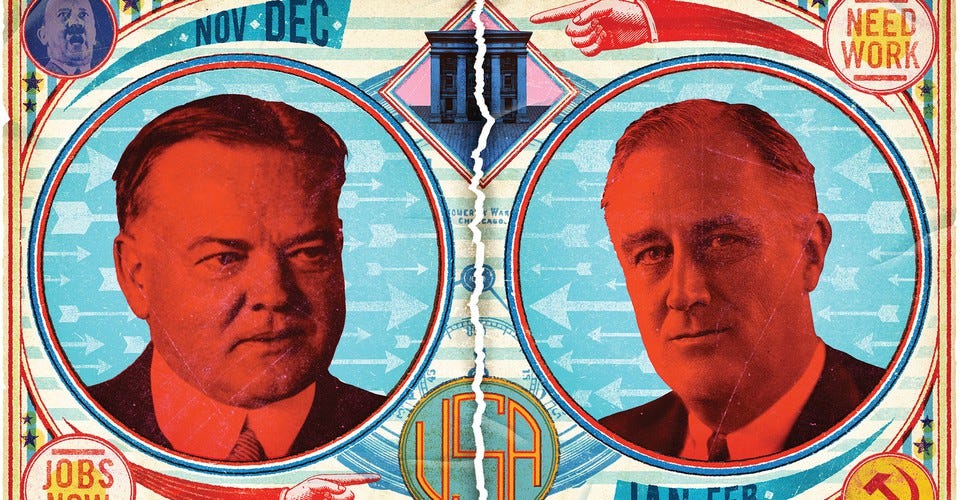

Last November, by which I mean 2019, a team at HuffPost and ProPublica figured: maybe data? Trustworthy public statistics are surely important to everyone—you need to know the state of the country before you can work out what you want to change about it. So this team started to assemble as complete a reckoner as possible of the kind of damage that the Trump administration was wreaking upon the collection and analysis of federal statistics. It was a full year of work by these reporters, and the project’s results were published last week. I was associated with this project in a small way: I talked to statisticians, data scientists, census officials, and civil servants about the view from the inside, to put together a brief introductory essay to the project. If you’re in the US and reading this on the eve of the election, it’s worth at least skimming the report, to get a horrified sense of how Trump has gutted the very lifeblood of policy-making.

The example that data scientists quoted to me most to illustrate the importance of federal statistics concerned the levels of lead in gasoline and paint. Lobbies had managed to strike back against any attempts to regulate these levels, until a routine government survey established conclusively that people had dangerous levels of lead in their blood. This is why data matters, even beyond the election:

The quality of data is hard to separate from the quality of governance. The state’s machinery works only if the data it is using to make its decisions is sound and fair. After all, a nation is an act of invention—an abstract, uncanny idea made real every day by a million concrete things that citizens decide they want for themselves. Food that is edible. Streets that are safe to walk. Air that is clean. Workplaces that treat people well. It is in the measures of these qualities—how edible? how safe? how clean? how well?—that a nation shapes itself. Four more years of data decay will fatally weaken the government and its capacity to help its people. The act of invention falters. The lead stays in the gasoline.

The ruin of data collection is terrible to behold. Numerous, numerous environmental statistics, of course. Orphaned or underfunded departments. No data on ICE enforcement raids or deaths in custody. No data for deaths in correctional institutions. A corrupted census process. Corrupted Covid statistics. One agency, the Economic Research Service, which collects agricultural data, was moved from Washington DC to Kansas City, Missouri, in the knowledge that scientists and statisticians who had built their lives in DC for years would be unwilling to uproot themselves suddenly. Layoffs by coercion. A former ERS administrator told me that the agency probably enraged the administration because some of its research results were “uncomfortable or inconvenient.” If you don’t like the numbers, get rid of them.

Doubtless the same thing is happening in India. We know, already, of the government’s habit of rejigging GDP figures to prettify its record on economic growth. I bet there’s more. And so I wonder: Can this be a point of bipartisan agreement? That governments have no business wrecking the data that tells them, and more importantly us, what is going on with our countries? And that governments caught doing this in such egregious ways are failing us and our collective future?

PS. If you’re reading this and have insider-y tips about other government data being destroyed, whether in the US or the UK or India—email me!

“A Dominant Character” has made it into a prize longlist: the Tata Literature Live! Non Fiction Book of the Year. The full list includes some very fine books by very fine writers.

Which is an opportune moment to remind you, of course, that the book continues to be easily available, in time for Christmas gift purchasing season!

In India

In the US

And in the UK, on The Bookshop, the exciting, newly launched web site that sells books from local, indie bookstores. (And also on Amazon, if you happen to be loyal.)

After the publication of the HuffPost piece, a couple of people wrote in asking if there were any good books they might read, locating statistics and data within the larger history of policy-making. I read three books, recommended to me by Katherine Wallman, the former Chief Statistician of the United States, and I can do no better than pass those titles on:

“The American Census: A Social History” by Margo Anderson

“The Politics of Numbers,” ed. William Alonso and Paul Starr

“Revolution in US Government Statistics, 1927-1976” by Joseph Duncan and William Shelton

An outtake from this data essay, narrated to me by Charles Rothwell, director of the National Center for Health Statistics:

Health data is considered so important that, unlike with many other systems, the federal government pays the states to collect and pass statistics on. “We pay $24 million or so for all the 50 states,” Rothwell said. But the states had their own reporting processes. When Rothwell became the head of vital statistics many years ago, he started pushing states to automate the reporting of mortality data: getting physicians in hospitals to feed the information into electronic systems that would then aggregate it for federal use.

“There was great scepticism whether this could be done,” Rothwell said, “and if physicians would comply with this.” The NCHS had to sweeten the pot—paying over and above its contracted fee to get states to install these electronic systems. (That software is coded in Cobol, and is in desperate need of change.) Over 20-odd years, states slowly switched over, but it was like pushing a boulder up a mountain. When I spoke to Rothwell last year, four states still hadn’t transitioned to electronic systems.

The lethargy of this transition through the 2000s had a fatal side effect. Without the electronic reporting systems, the federal government would get mortality numbers months and months later. As a result, analysing those numbers sometimes grew cumbersome and complicated. Which is why, Rothwell said, the federal government missed “all the drug overdose deaths, the suicides, the medical examiner events” that pointed to the growing opioid abuse crisis in the US. The state of West Virginia, where the economists Angus Deaton and Anne Case eventually unearthed the epidemic of opioid abuse around 2015, did not at the time have an electronic system to register mortality data. Had that system been around, Rothwell said, perhaps the government could have spotted the patterns earlier. Lives could have been saved.

Such is the importance of timely, accurate data.